Predictive maintenance of ATMs is a crucial aspect of cash management for banks and ATM operators, because hardware malfunctions are among the most important contributors to ATM downtime. The most frequently used ATMs are also the most likely to degrade and eventually malfunction, which can lead to customer dissatisfaction, so predictive maintenance is essential to achieving a satisfactory level of service. A method that accurately forecasts potential malfunctions can help prevent such service interruptions by enabling well-timed preemptive maintenance. In this post, we share our algorithmic approach to this difficult yet central forecasting problem.

Our approach uses the continuously monitored internal operating system (XFS) signals to predict potentially fatal failures on an ATM. We therefore cast the problem as binary classification, and attempt to predict any noticeable interruption to customer service at a given ATM. This analytical task comes with its challenges, for example:

- The high-dimensional, high-frequency, and noisy nature of the incoming signals

- Multiple causes of ATM malfunction, potentially being preceded by different patterns of XFS signals

- Each ATM having different use frequencies and ages, all affecting the observed patterns of evidence and failure

- Relationship of the malfunctions with external factors such as special calendar events or day of the week

- Absence or rarity of malfunctions, leading to lack of sufficient training data

In response, we developed a hybrid deep learning architecture that makes efficient use of the available data to forecast a potential failure. First, because our customer schedules interventions daily, predictions at any granularity finer than one day would not be actionable. We therefore resampled the XFS signals into daily binary indicators, and set as our target the probability of a malfunction within the next day or the next week. Moreover, since our clients own and operate frequently used ATMs, we had a fairly large sample of malfunction cases to train on, without needing to rely on undersampling, oversampling, or other data augmentation.
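The resampling and target construction described above can be illustrated with a minimal sketch. It assumes that "daily binary" means one 0/1 flag per signal per day (did the signal fire at least once?), and that the label for a day is 1 when a failure occurs within the following `horizon` days. The function names and the signal names (`CARD_READER_WARN`, `DISPENSER_JAM`) are hypothetical placeholders, not actual XFS codes or our production pipeline.

```python
from datetime import date, datetime, timedelta


def daily_binary_features(events, signal_names, start, end):
    """Collapse timestamped (timestamp, signal_name) events into one 0/1 row per day."""
    seen = {}  # calendar day -> set of signal names observed on that day
    for ts, name in events:
        seen.setdefault(ts.date(), set()).add(name)
    days = [start + timedelta(days=i) for i in range((end - start).days + 1)]
    rows = [[1 if name in seen.get(d, ()) else 0 for name in signal_names] for d in days]
    return days, rows


def horizon_labels(days, failure_days, horizon):
    """Label a day 1 if a failure occurs on any of the next `horizon` days."""
    failure_days = set(failure_days)
    return [
        int(any(d + timedelta(days=k) in failure_days for k in range(1, horizon + 1)))
        for d in days
    ]


# Hypothetical event log for a single ATM.
events = [
    (datetime(2023, 1, 1, 9, 30), "CARD_READER_WARN"),
    (datetime(2023, 1, 1, 17, 5), "CARD_READER_WARN"),  # same day: still a single 1
    (datetime(2023, 1, 3, 11, 0), "DISPENSER_JAM"),
]
signal_names = ["CARD_READER_WARN", "DISPENSER_JAM"]
days, rows = daily_binary_features(events, signal_names, date(2023, 1, 1), date(2023, 1, 4))

failure_days = [date(2023, 1, 4)]  # assumed malfunction date
labels_1d = horizon_labels(days, failure_days, horizon=1)
labels_7d = horizon_labels(days, failure_days, horizon=7)
```

In practice, the last `horizon` days of the observation window have an incomplete look-ahead and would normally be dropped from training rather than labeled 0.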

In our method, we wanted to exploit the fact that we have multiple ATMs that potentially share similar patterns of signal and malfunction. However, it is also important to accommodate the fact that different ATMs have different likelihoods or patterns of malfunction, depending on their age and frequency of use. Moreover, our method needed to recognize the various longitudinal patterns that lead to ATM failure in a stream of signals that can be very noisy.

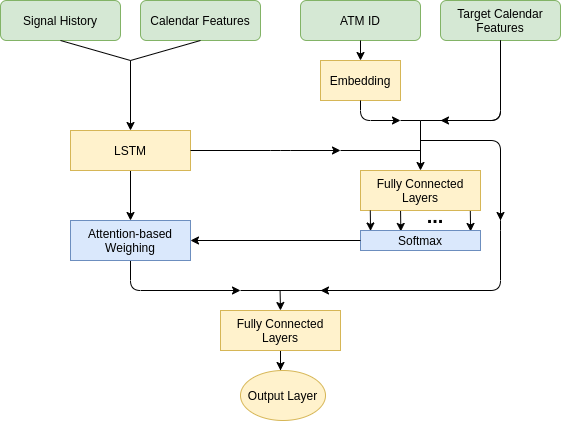

Based on these factors, the algorithm we came up with processes the longitudinal signal data, along with calendar features, with recurrent hidden units, which allows it to recognize temporal patterns in the signals. This recurrent layer is followed by an attention layer, whose inputs are the recurrent latent outputs together with the ATM ID and the target days' calendar features, so that different patterns that foretell malfunction can be discerned according to the type of ATM or the nature of the day(s) under consideration. Whereas calendar features are binary-encoded, ATM IDs are first processed by an embedding layer, which produces a dense lower-dimensional representation for each ATM. This representation can also be reused in other downstream analytical tasks, such as creating management or forecast clusters for ATMs. Finally, the attention-weighted temporal patterns and the external features themselves are concatenated and fed to fully connected hidden layers to obtain a prediction. The figure above summarizes this architecture. The prediction can be interpreted as the probability of malfunction for the given ATM in a predetermined period, and the necessary actions can be mobilized accordingly.
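To make the attention and concatenation steps more concrete, here is a minimal forward-pass sketch in plain Python. It is an illustration under stated assumptions rather than our production model: the post does not specify the attention form, so additive (tanh) attention is assumed here; the recurrent outputs are replaced by random stand-in vectors; all weights are random and untrained; and every name and dimension (`attention_pool`, `predict_failure_probability`, `params`, and so on) is hypothetical.

```python
import math
import random


def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(hidden_states, context, W_h, W_c, v):
    """Additive attention over time steps, conditioned on a context vector."""
    ctx_proj = matvec(W_c, context)
    scores = []
    for h in hidden_states:
        act = [math.tanh(p + c) for p, c in zip(matvec(W_h, h), ctx_proj)]
        scores.append(sum(vi * a for vi, a in zip(v, act)))
    weights = softmax(scores)
    dim = len(hidden_states[0])
    pooled = [sum(w * h[j] for w, h in zip(weights, hidden_states)) for j in range(dim)]
    return pooled, weights


def predict_failure_probability(hidden_states, atm_embedding, calendar_bits, params):
    # Context = ATM embedding plus the target day's binary calendar features.
    context = atm_embedding + calendar_bits
    pooled, weights = attention_pool(
        hidden_states, context, params["W_h"], params["W_c"], params["v"]
    )
    # Concatenate the weighted temporal summary with the external features.
    features = pooled + context
    hidden = [max(0.0, z + b) for z, b in zip(matvec(params["W_1"], features), params["b_1"])]
    logit = sum(w * x for w, x in zip(params["w_out"], hidden)) + params["b_out"]
    return 1.0 / (1.0 + math.exp(-logit)), weights


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
T, d_h, d_emb, d_cal, d_att, d_fc = 7, 4, 3, 7, 5, 6  # assumed sizes
d_ctx = d_emb + d_cal
params = {
    "W_h": random_matrix(d_att, d_h, rng),
    "W_c": random_matrix(d_att, d_ctx, rng),
    "v": [rng.uniform(-0.5, 0.5) for _ in range(d_att)],
    "W_1": random_matrix(d_fc, d_h + d_ctx, rng),
    "b_1": [0.0] * d_fc,
    "w_out": [rng.uniform(-0.5, 0.5) for _ in range(d_fc)],
    "b_out": 0.0,
}
hidden_states = random_matrix(T, d_h, rng)  # stand-in for recurrent layer outputs
atm_embedding = [rng.uniform(-0.5, 0.5) for _ in range(d_emb)]  # one row of an embedding table
calendar_bits = [0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0]  # one-hot day of week for the target day

prob, weights = predict_failure_probability(hidden_states, atm_embedding, calendar_bits, params)
```

Because the context vector enters the attention scores, the same sequence of hidden states can be weighted differently for different ATMs or target days, which is the behavior the architecture is designed to allow. In a trained model, the embedding table and all weight matrices would be learned jointly from the labeled data.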

Contact us to learn more about our innovative solutions for various aspects of predictive cash management, including Idle Cash and CIT Routing Optimization.
